Is Google Targeting LLM-Focused Self-Promotional Content?

There is a real tension building right now between two things a lot of publishers want at the same time: visibility in AI tools like ChatGPT and Perplexity, and strong rankings in Google’s organic search results. For a while, some companies thought they could chase both goals with the same content. That approach is starting to look increasingly risky, and the SEO community has been making a lot of noise about it lately.

This article breaks down what’s happening, who’s getting hit, why Google is likely targeting this type of content, and what publishers should actually do about it going forward.

What Are LLM-Focused Self-Promotional Listicles?

Before getting into the data and the names, it’s worth explaining exactly what we’re talking about here, because this tactic has a very specific shape.

An LLM-focused self-promotional listicle is an article structured around a premise like “the best tools for X” or “top platforms for Y,” where the company publishing the article also happens to be one of the products on the list, usually near or at the top. The content isn’t written primarily to help a human reader make an informed decision. It’s written to get cited by AI answer engines.

This is where AEO comes in. AEO stands for Answer Engine Optimization, and it refers to the practice of structuring content so that AI tools are more likely to pull from it when answering user queries. Instead of optimizing for a Google ranking, you’re optimizing to become a source that ChatGPT, Gemini, or Perplexity excerpts when someone asks a relevant question. Companies have been pouring resources into this because AI-generated answers increasingly influence purchasing decisions, and showing up in those answers can drive real traffic and brand recognition.

The problem is what the content looks like in practice. These articles typically have thin supporting copy around each list item, a templated structure repeated across dozens or hundreds of pages, heavy internal linking designed to push authority toward the publisher’s own product pages, and brand mentions that are disproportionately weighted toward whoever is publishing the piece. When you scale this kind of content with AI writing tools, the output compounds quickly. A team can go from ten of these articles to five hundred in a matter of weeks.

And it’s not just listicles. Thin LLM-targeting content shows up in other formats too: comparison pages, FAQ clusters, “what is” definitional pages, and tool roundups all follow the same basic logic. The listicle is just the most obvious and most documented version of the problem.

Why SEO Link Builders and Content Teams Are Paying Attention

SEO link builders and content strategists are watching this pattern closely because the sites getting hit aren’t small blogs running sketchy tactics. These are established publishers and, in some cases, major enterprise companies. When sites with real domain authority and dedicated SEO resources start dropping in organic visibility because of a content strategy they were publicly promoting, it sends a signal to the entire industry.

The chatter has picked up significantly in early 2026, with several well-known voices in the SEO community independently documenting the same pattern across different site profiles.

Who Is Reporting This and What Are They Seeing?

The first notable coverage came from Barry Schwartz, a web technologist and the lead reporter at Search Engine Roundtable, who covered this trend on February 4th, 2026. Schwartz has been tracking Google algorithm changes for years and is generally one of the first people to document shifts in organic visibility at scale.

Shortly after, Lily Ray, an SEO analyst known for her deep work on Google’s algorithm updates, noted that she had compiled a list of approximately 30 sites fitting this exact profile, all showing significant drops in organic visibility. Some observers disputed her analysis. Even so, Ray has been one of the more consistent voices on the Helpful Content Update and its successors, so her involvement in documenting this wave is significant.

Glenn Gabe, who has spent years building detailed before-and-after datasets on sites affected by Google’s core and helpful content updates, also corroborated the February wave. Gabe’s work tends to be particularly useful because he tracks recovery patterns, not just the initial drops.

More recently, Gagan Ghotra posted about enterprise companies that have been scaling content with LLM-focused production workflows and seeing the organic consequences. His commentary called out Webflow specifically, noting the company had been very public about its AEO content strategy. Webflow is a legitimate, well-resourced company, which is exactly what makes the callout interesting. This isn’t a case of some fly-by-night content farm getting penalized. It’s a company with brand recognition and a content team that made a strategic decision and is now dealing with the fallout in its organic numbers.

What makes this more than just anecdotal grumbling is the convergence. You have multiple credible, independent researchers seeing the same pattern at roughly the same time, across a wide enough sample of sites that it’s difficult to write off as coincidence.

This Isn’t Google’s First Move Against Thin Content

It would be a mistake to treat this as a brand new development. Google has been steadily expanding its enforcement against content that prioritizes search engine signals over genuine reader value for several years.

The Helpful Content Update, which Google first rolled out in August 2022, was the clearest public signal that Google was changing how it evaluated content quality. The system was designed to identify and demote content written for search engines rather than people. At the time, it created measurable disruption across affiliate publishing and information-heavy sites.

The September 2023 Helpful Content Update was significantly more aggressive. It hit large swaths of the publishing world hard, and many sites that were affected have still not fully recovered. Google has confirmed that recovery from HCU-related drops often only comes with subsequent core updates, not through continuous real-time re-evaluation.

Then came the March 2024 Core Update, which rolled out alongside new spam policies that explicitly targeted scaled content production. Google was direct about the fact that this update was designed to catch content created in large volumes through automated or semi-automated means, without genuine human editorial judgment applied to individual pieces.

The LLM-targeting content wave is the next iteration of the same ongoing enforcement cycle. The specific format is new. The underlying logic, that Google will eventually find and demote content written to manipulate its systems rather than serve readers, is not new at all.

What is different this time is speed. AI writing tools have made it possible to produce this kind of content at a scale that would have been logistically impossible three years ago. That acceleration likely compresses Google’s timeline for algorithmic response, because the signal-to-noise ratio in the index degrades faster when bad content can be produced in hours instead of weeks.

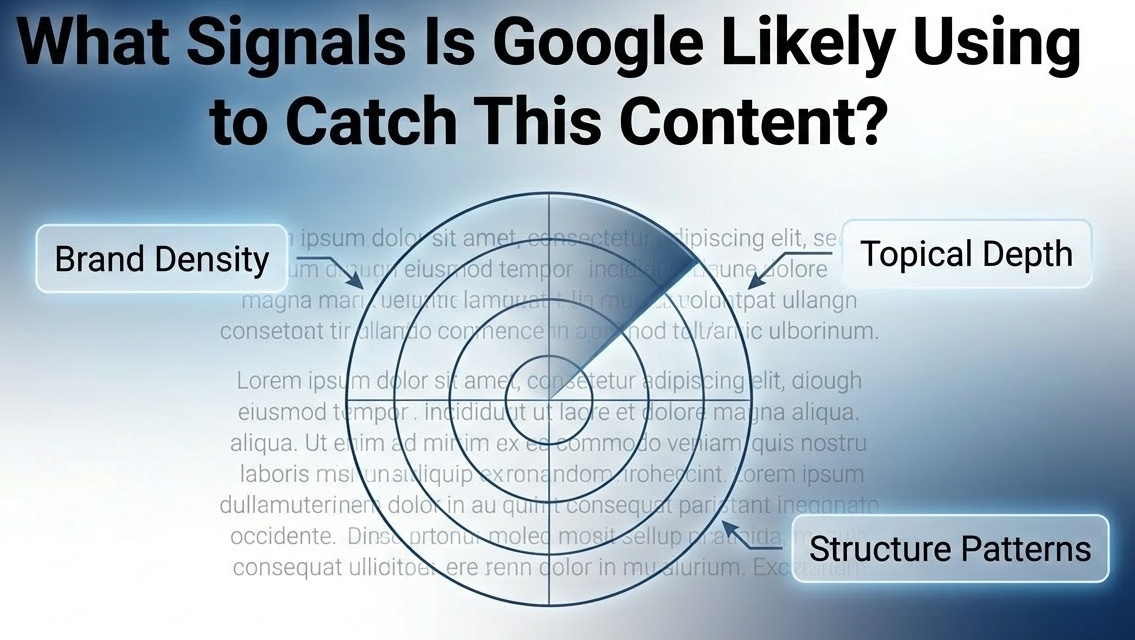

What Signals Is Google Likely Using to Catch This Content?

Google hasn’t published a technical breakdown of exactly how it identifies LLM-focused self-promotional content, so what follows is analytical inference rather than confirmed documentation. But based on what’s publicly known about how Google’s systems work and what the affected sites have in common, several likely signals stand out.

Brand mention density is probably one of the cleaner signals. When the publisher is also one of the products being recommended, and that product appears at or near the top of multiple listicles across the site, the self-referential pattern is easy to quantify: the publisher’s share of brand mentions and its typical list position can both be measured across pages.
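For publishers who want to check their own pages, here is a minimal sketch of one way to measure brand mention share. It is an auditing heuristic, not a description of how Google measures anything. The function name, the brand names, and the sample text are all hypothetical.

```python
import re


def brand_mention_share(text: str, brands: list[str], own_brand: str) -> float:
    """Return the fraction of all brand mentions in `text` that are `own_brand`.

    Matching is case-insensitive and uses word boundaries, so "Acme" does not
    match inside "Acmeville". Returns 0.0 when no listed brand is mentioned.
    """
    counts = {}
    for brand in brands:
        pattern = r"\b" + re.escape(brand) + r"\b"
        counts[brand] = len(re.findall(pattern, text, flags=re.IGNORECASE))

    total = sum(counts.values())
    if total == 0:
        return 0.0
    return counts.get(own_brand, 0) / total


# Hypothetical listicle copy published by "Acme".
sample = "Acme is great. Acme beats Beta. Gamma is fine. Acme wins."
share = brand_mention_share(sample, ["Acme", "Beta", "Gamma"], own_brand="Acme")
```

A share well above what an even-handed comparison would produce, repeated across many pages, is the kind of pattern worth reviewing by hand. Any cutoff is a judgment call rather than a known threshold.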

Topical shallowness is another factor. These articles tend to cover a subject at keyword depth without demonstrating any genuine expertise or original perspective. A human reader who already knows the topic would find nothing new. A human reader who doesn’t know the topic would finish the article without really understanding it.

Templated structure at scale is also telling. When a large number of pages on a site share the same structural fingerprint, including the same approximate word count, the same heading patterns, and the same content organization, it suggests programmatic or semi-programmatic production. Google has gotten better at identifying this pattern, and the March 2024 spam policies were at least partially aimed at exactly this kind of scaled templating.
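To make the idea of a structural fingerprint concrete, here is a rough sketch of how a site owner might estimate how much of a site shares one template. It assumes each page has already been reduced to its heading levels and word count. The data shape, the function names, and the 250-word bucket are illustrative assumptions, not anything Google has documented.

```python
from collections import Counter


def fingerprint(page: dict, bucket: int = 250) -> tuple:
    """Reduce a page to (sequence of heading levels, coarse word-count bucket)."""
    levels = tuple(level for level, _title in page["headings"])
    return (levels, page["word_count"] // bucket)


def largest_template_share(pages: list[dict], bucket: int = 250) -> float:
    """Return the fraction of pages that share the most common fingerprint."""
    if not pages:
        return 0.0
    counts = Counter(fingerprint(page, bucket) for page in pages)
    most_common_count = counts.most_common(1)[0][1]
    return most_common_count / len(pages)


# Hypothetical pages: three follow the same outline, one does not.
pages = [
    {"headings": [(1, "Best CRM tools"), (2, "Acme"), (2, "Beta"), (2, "Gamma")], "word_count": 1010},
    {"headings": [(1, "Best email tools"), (2, "Acme"), (2, "Delta"), (2, "Beta")], "word_count": 1100},
    {"headings": [(1, "Best chat tools"), (2, "Acme"), (2, "Gamma"), (2, "Delta")], "word_count": 1240},
    {"headings": [(1, "How we migrated our billing system"), (2, "Background"), (3, "Constraints")], "word_count": 2600},
]
template_share = largest_template_share(pages)
```

The fingerprint is deliberately coarse. It ignores wording entirely and looks only at outline shape and approximate length, which is the kind of similarity the paragraph above describes.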

User engagement signals likely play a role as well. Content that doesn’t deliver genuine value to a human reader tends to produce poor dwell time and high bounce rates, which feed back into how Google evaluates quality over time.

Finally, Google’s understanding of entity relationships has improved considerably. The ability to identify that the publisher of a “best tools” article is the same entity as the product being recommended, and to weight that relationship when evaluating the content’s credibility, is well within what Google’s current systems can do.

The Real Tension: LLM Visibility vs. Organic Search Performance

This is the part of the conversation that most coverage glosses over, and it’s actually the most important part for publishers trying to figure out what to do.

The tactics that improve your chances of being cited by AI answer engines and the qualities that Google’s organic algorithm rewards are not the same thing. In many cases, they actively work against each other.

AI tools appear to favor content that is structured, assertive, and easy to excerpt. Clean declarative sentences, clear list formatting, and definitive claims make it easier for an LLM to pull a passage and surface it in response to a user query. That’s why the listicle format is so appealing for AEO. It’s modular. Each item can stand alone as a quotable unit.

Google’s organic algorithm, on the other hand, rewards depth, original perspective, demonstrated expertise, and content that earns genuine engagement from readers. The kind of content that ranks well in Google tends to be harder to excerpt cleanly because it’s contextual, nuanced, and built around a coherent argument rather than a set of standalone items.

When you optimize aggressively for LLM citation, you often end up stripping out exactly the qualities that Google uses to identify high-quality content. You’re making the content more modular and more declarative, which serves AI tools, while making it thinner and less distinctive, which hurts organic performance.

The short-term results of AEO-focused content can look genuinely good. Publishers who moved early on this tactic saw real AI visibility gains. The problem, as the current wave of organic drops illustrates, is that the organic consequences catch up eventually. These sites hit a wall in Google’s results, and because so much of their content was built around the AEO format, the hit is broad rather than isolated.

The honest answer to whether you can serve both masters at once is: sometimes, but not through the same content. A well-researched, deeply reported article can earn both organic rankings and AI citations. A templated listicle written to manufacture AI visibility almost certainly cannot do both for long.

What the Data Says About Recovery

Recovery data on this specific wave is still limited because much of it is recent. But the precedent from earlier rounds of Google’s content quality enforcement is not encouraging for fast recovery expectations.

Sites that were hit by the September 2023 Helpful Content Update are still, in many cases, struggling to regain their previous organic visibility. Google has confirmed that HCU recoveries tend to happen at core update intervals, not continuously, which means a site hit in one cycle may need to wait months for the next core update to register any improvements it has made.

The implication for publishers who are already seeing drops from this current wave is that fixing the content quickly does not necessarily mean recovering quickly. The timeline between cleanup and algorithmic credit is long and inconsistent. That’s a meaningful business risk, particularly for publishers whose organic traffic is a primary revenue driver.

For sites that haven’t been hit yet but are running similar content strategies, that same lag is a reason to act now rather than wait. The longer this content lives on a site, the stronger the accumulated algorithmic signal is likely to become.

What Publishers Should Actually Do

If your site has already been affected, the first step is a content audit focused specifically on the characteristics described above. Look for pages with high self-promotional density, templated structure, and thin supporting copy. These are the pages most likely driving the algorithmic signal, and they need to be either substantially rewritten or removed. Waiting for a manual action notice is not a useful strategy here. Algorithmic hits of this type don’t come with warnings.
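As a starting point for that audit, the three characteristics can be combined into a simple triage list. The sketch below assumes the per-page metrics have already been collected by whatever tooling a team uses. The field names and thresholds are hypothetical and should be tuned to the site.

```python
from dataclasses import dataclass


@dataclass
class PageMetrics:
    url: str
    own_brand_share: float       # fraction of brand mentions that are the publisher's own
    shares_template: bool        # page matches a structure reused widely across the site
    words_per_list_item: float   # average supporting copy per list entry


def audit_flags(page: PageMetrics,
                max_brand_share: float = 0.5,
                min_words_per_item: float = 80) -> list[str]:
    """Return which of the three audit characteristics a page exhibits."""
    flags = []
    if page.own_brand_share > max_brand_share:
        flags.append("self-promotional density")
    if page.shares_template:
        flags.append("templated structure")
    if page.words_per_list_item < min_words_per_item:
        flags.append("thin supporting copy")
    return flags


def rank_for_review(pages: list[PageMetrics]) -> list[tuple[str, list[str]]]:
    """Return flagged pages only, with the most-flagged pages first."""
    flagged = [(page.url, audit_flags(page)) for page in pages]
    flagged = [(url, flags) for url, flags in flagged if flags]
    return sorted(flagged, key=lambda item: len(item[1]), reverse=True)


# Hypothetical metrics for three pages.
review_queue = rank_for_review([
    PageMetrics("/best-crm-tools", 0.7, True, 40),
    PageMetrics("/billing-migration-story", 0.2, False, 200),
    PageMetrics("/crm-comparison", 0.6, False, 150),
])
```

Pages that trip all three flags are the natural first candidates for a rewrite or removal. Pages with a single flag may only need a closer read.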

If you’re considering scaling LLM-targeting content, the distinction that matters is whether your product is a legitimate part of the answer to the question you’re writing about. There’s a real difference between an article that genuinely compares tools in your category and gives your product a fair and honest position in that comparison, and an article that exists primarily to manufacture a citation opportunity. Google’s systems are getting better at detecting that distinction, and so are readers.

For enterprise content teams specifically, the risk profile has changed. This is no longer a fringe SEO debate. It’s a documented pattern with named companies and measurable traffic drops. If your content strategy is built around LLM-targeting at scale, you need a clear channel diversification plan that doesn’t assume organic search and AI visibility will move together, because they increasingly don’t.

Where This Is Headed

Google has a consistent track record of expanding its enforcement perimeter as new forms of content manipulation become widespread. The Helpful Content Update started narrow and got broader with each iteration. The spam policies have expanded year over year. There’s no reason to expect the response to LLM-focused thin content to follow a different pattern.

The publishers who will come out of this period in the best position are those treating organic search and AI visibility as distinct channels with distinct content requirements. Organic search rewards depth and genuine expertise. AI citation rewards structure and clarity. The best content earns both, but it earns them because it’s actually good, not because it was engineered to trigger a specific algorithmic response.